
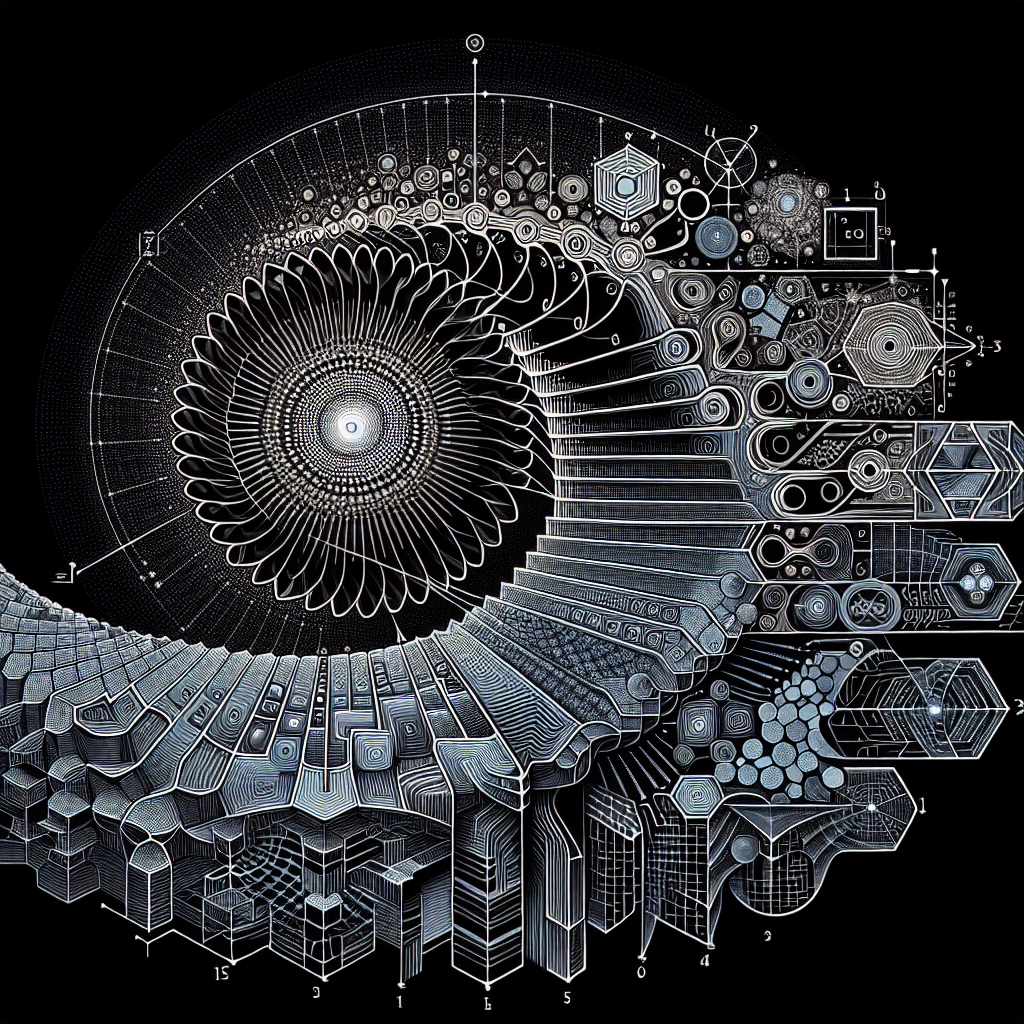

Recurrent Neural Networks (RNNs) are a class of artificial neural networks designed to model sequential data. They process a sequence one element at a time, carrying a hidden state from each step to the next, and have been used in applications ranging from natural language processing to time series forecasting. However, simple RNNs suffer from limitations such as the vanishing gradient problem: the gradient signal shrinks as it is propagated back through many time steps, which makes them difficult to train effectively on long sequences.
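To make the vanishing gradient problem concrete, here is a minimal sketch of a one-unit RNN in plain Python. The update rule `h_t = tanh(w * h_{t-1} + u * x_t)` is the textbook form; the weights `w` and `u`, the inputs, and the function names are illustrative assumptions rather than values from any trained model.

```python
import math

def rnn_forward(xs, w=0.5, u=1.0, h0=0.0):
    """Run a scalar RNN h_t = tanh(w*h_{t-1} + u*x_t); return all hidden states."""
    hs = [h0]
    for x in xs:
        hs.append(math.tanh(w * hs[-1] + u * x))
    return hs

def grad_wrt_initial_state(hs, w=0.5):
    """Chain rule: d h_T / d h_0 is the product over t of w * (1 - h_t^2)."""
    g = 1.0
    for h in hs[1:]:
        g *= w * (1.0 - h * h)
    return g

xs = [0.1] * 50                                    # hypothetical input sequence
hs = rnn_forward(xs)
short_grad = abs(grad_wrt_initial_state(hs[:6]))   # gradient through 5 steps
long_grad = abs(grad_wrt_initial_state(hs))        # gradient through 50 steps
print(short_grad, long_grad)
```

Every factor in the product has magnitude of at most `|w|`, so with `|w| < 1` the gradient shrinks geometrically with the number of steps. That is why the first inputs of a long sequence have almost no influence on learning.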

To address these limitations, researchers have developed more complex architectures known as gated RNNs. These architectures incorporate gating mechanisms that allow the network to selectively update and forget information over time, making them better suited for capturing long-range dependencies in sequential data.
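The core idea of a gate can be sketched in a few lines: a value between 0 and 1 decides how much of the old state is kept and how much is overwritten by a new candidate. This is a simplified illustration and not any specific published cell. In a real network the gate value is computed from the current input and previous state with learned weights; the fixed `gate_logit` used here is an assumption for demonstration.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def gated_update(h_prev, candidate, gate_logit):
    """Blend old state and candidate; gate near 0 keeps, gate near 1 overwrites."""
    g = sigmoid(gate_logit)
    return (1.0 - g) * h_prev + g * candidate

# Gate almost closed: the stored value survives 100 steps nearly unchanged.
h = 1.0
for _ in range(100):
    h = gated_update(h, candidate=0.0, gate_logit=-10.0)
kept = h

# Gate almost open: the stored value is replaced in a single step.
overwritten = gated_update(1.0, candidate=0.0, gate_logit=10.0)
print(kept, overwritten)
```

Because the old state passes through with a factor close to 1 when the gate is closed, information (and gradient) can travel across many time steps without being squashed at every step.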

One of the best-known gated architectures is the Long Short-Term Memory (LSTM) network. Its key innovation is a separate cell state controlled by three gates: a forget gate that decides how much of the old cell state to keep, an input gate that decides how much new information to write, and an output gate that decides how much of the cell state to expose as the hidden state. Because the cell state is updated additively, the network can retain important information over long periods of time, and LSTMs have proven effective at modeling long sequences.
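As a rough sketch of how the gates fit together, the following is a single-unit LSTM step in plain Python using the standard equations (forget, input, and output gates plus a tanh candidate). The parameter layout `name -> (w_x, w_h, b)` and the hand-picked values are assumptions for illustration; a real implementation would use learned weight matrices over vectors.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def lstm_step(x, h_prev, c_prev, p):
    """One step of a single-unit LSTM. p maps gate name -> (w_x, w_h, b)."""
    def pre(name):
        w_x, w_h, b = p[name]
        return w_x * x + w_h * h_prev + b

    f = sigmoid(pre("forget"))        # how much of the old cell state to keep
    i = sigmoid(pre("input"))         # how much of the candidate to write
    o = sigmoid(pre("output"))        # how much of the cell state to expose
    g = math.tanh(pre("candidate"))   # proposed new content
    c = f * c_prev + i * g            # additive cell-state update
    h = o * math.tanh(c)
    return h, c

# Hypothetical parameters: forget gate mostly open, input gate mostly closed.
params = {
    "forget": (0.0, 0.0, 5.0),
    "input": (0.0, 0.0, -5.0),
    "output": (0.0, 0.0, 0.0),
    "candidate": (1.0, 0.0, 0.0),
}

h, c = 0.0, 1.0
for x in [0.3, -0.7, 0.9, -0.2]:
    h, c = lstm_step(x, h, c, params)
print(h, c)
```

With these settings the cell state stays close to its initial value of 1.0 despite the changing inputs, which is the "remembering" behavior the gates are meant to provide.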

Another popular gated architecture is the Gated Recurrent Unit (GRU). GRUs are similar to LSTMs but simpler: they merge the cell state and hidden state into a single state and use two gates (update and reset) instead of three. This gives them fewer parameters, which makes them more computationally efficient and often faster to train. Despite their simplicity, GRUs have been shown to perform on par with LSTMs in many tasks.
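For comparison, here is a minimal single-unit GRU step in plain Python, together with a helper that counts parameters. The sketch assumes the common formulation with an update gate `z` and a reset gate `r`, and the convention `h = (1 - z) * h_prev + z * h_cand`; some libraries swap the roles of `z` and `1 - z` or use extra bias terms, so exact counts vary. All parameter names and values are hypothetical.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def gru_step(x, h_prev, p):
    """One step of a single-unit GRU. p maps name -> (w_x, w_h, b)."""
    def pre(name, h):
        w_x, w_h, b = p[name]
        return w_x * x + w_h * h + b

    z = sigmoid(pre("update", h_prev))                # how much to overwrite
    r = sigmoid(pre("reset", h_prev))                 # how much history feeds the candidate
    h_cand = math.tanh(pre("candidate", r * h_prev))  # proposed new state
    return (1.0 - z) * h_prev + z * h_cand

def param_count(n_inputs, n_hidden, n_blocks):
    """Weights plus one bias vector per block (4 blocks for LSTM, 3 for GRU)."""
    return n_blocks * (n_hidden * (n_inputs + n_hidden) + n_hidden)

# Hypothetical parameters: update gate mostly closed, so the state is preserved.
gru_params = {
    "update": (0.0, 0.0, -5.0),
    "reset": (0.0, 0.0, 0.0),
    "candidate": (1.0, 1.0, 0.0),
}

h = 0.8
for x in [0.3, -0.7, 0.9]:
    h = gru_step(x, h, gru_params)

lstm_size = param_count(10, 20, n_blocks=4)
gru_size = param_count(10, 20, n_blocks=3)
print(h, lstm_size, gru_size)
```

Under this counting, a GRU has three weight blocks where an LSTM has four, so for the same input and hidden sizes it carries about three quarters of the parameters.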

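In recent years, architectures that drop recurrence altogether have become prominent, most notably the Transformer. Rather than using gates, Transformers rely on a self-attention mechanism that lets every position in the input sequence attend directly to every other position. Because all positions are processed at once instead of step by step, Transformers are highly parallelizable and can relate distant elements directly, although the cost of standard self-attention grows quadratically with sequence length.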
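The self-attention computation itself can be sketched in plain Python as scaled dot-product attention. As a simplifying assumption, the inputs are used directly as queries, keys, and values. A real Transformer applies learned projections, uses multiple heads, and adds positional information, none of which is shown here. The `tokens` values are made up for illustration.

```python
import math

def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def self_attention(xs):
    """xs: list of equal-length vectors. Returns (outputs, attention weights)."""
    d = len(xs[0])
    outputs, weights = [], []
    for q in xs:  # each position is independent, so this loop could run in parallel
        scores = [sum(a * b for a, b in zip(q, k)) / math.sqrt(d) for k in xs]
        w = softmax(scores)
        weights.append(w)
        # Output is the attention-weighted average of all value vectors.
        outputs.append([sum(w_j * v[i] for w_j, v in zip(w, xs)) for i in range(d)])
    return outputs, weights

tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]  # hypothetical 3-token sequence
outputs, weights = self_attention(tokens)
for row in weights:
    print([round(w, 3) for w in row])
```

Each row of `weights` sums to 1 and has one entry per position, so the weight matrix has as many entries as the square of the sequence length. The same structure makes the computation both parallel and quadratic in cost.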

Overall, from simple RNNs to gated architectures and beyond, there is a wide range of options for modeling sequential data. Each architecture has its own strengths and weaknesses, and the right choice depends on the specific requirements of the task at hand, such as sequence length, available compute, and dataset size. By understanding the differences between these architectures, researchers and practitioners can choose the most appropriate model for their needs.

