
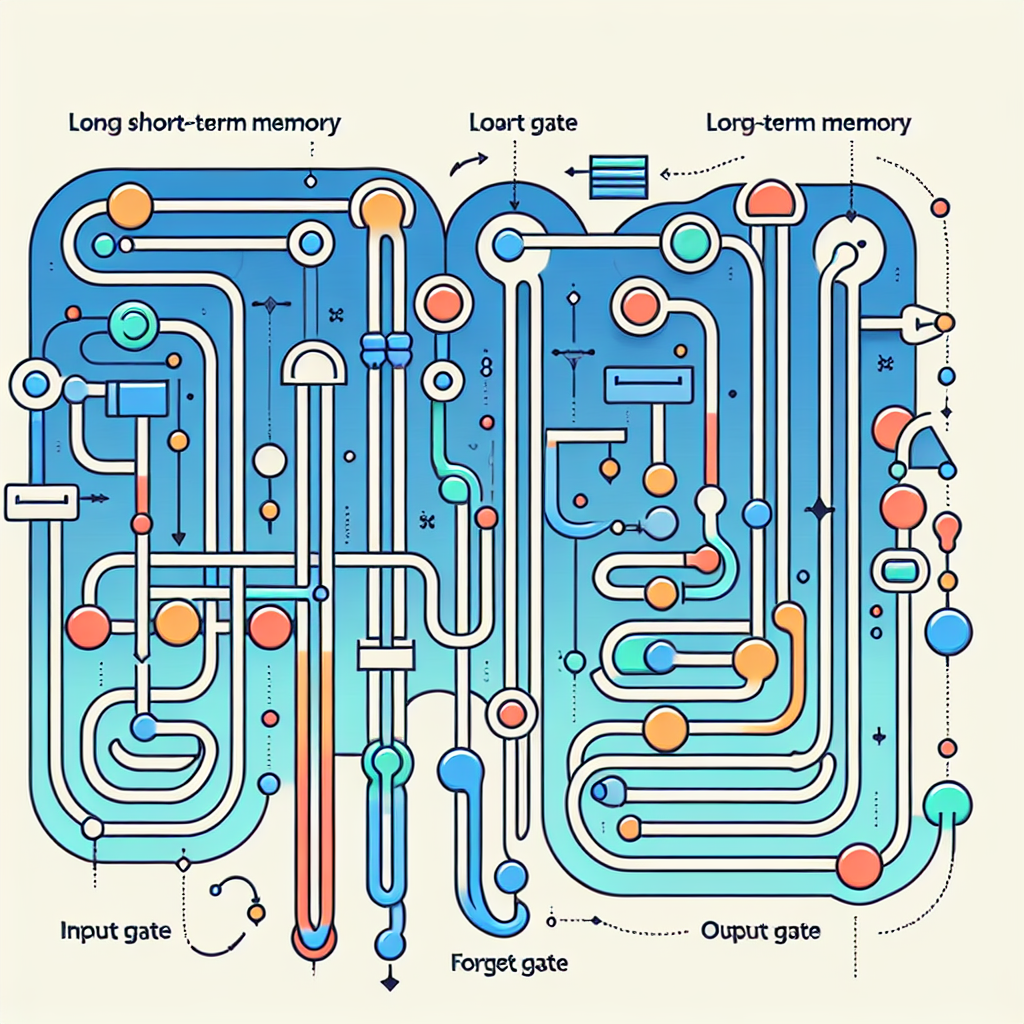

Deep learning models have revolutionized the field of natural language processing (NLP) and sequence modeling. One of the most popular and powerful neural network architectures used for sequence modeling is the Long Short-Term Memory (LSTM) network. The LSTM is a type of recurrent neural network (RNN) that is well suited to capturing long-term dependencies in sequential data.

LSTMs were introduced by Hochreiter and Schmidhuber in 1997 and have since become a key building block in many state-of-the-art NLP models. Unlike traditional RNNs, LSTMs have a more complex architecture built around gates (in the standard formulation, an input gate, a forget gate, and an output gate) that control the flow of information through the network. This allows LSTMs to capture long-range dependencies in sequential data, making them well suited to tasks such as language modeling, speech recognition, and machine translation.
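To make the role of the gates concrete, here is a minimal sketch of one LSTM time step in plain Python. It assumes the common formulation with forget, input, and output gates and no peephole connections; the names `lstm_step`, `init_params`, and the parameter keys are illustrative choices, not taken from any particular library.

```python
import math
import random

def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))

def affine(W, b, v):
    """Return W @ v + b, where W is a list of rows."""
    return [sum(w * x for w, x in zip(row, v)) + bi for row, bi in zip(W, b)]

def lstm_step(x, h_prev, c_prev, params):
    """One time step of a standard LSTM cell (assumed variant: no peepholes)."""
    z = x + h_prev  # concatenate the input with the previous hidden state
    f = [sigmoid(a) for a in affine(params["Wf"], params["bf"], z)]    # forget gate
    i = [sigmoid(a) for a in affine(params["Wi"], params["bi"], z)]    # input gate
    o = [sigmoid(a) for a in affine(params["Wo"], params["bo"], z)]    # output gate
    g = [math.tanh(a) for a in affine(params["Wg"], params["bg"], z)]  # candidate values
    # The forget gate scales the old cell state; the input gate scales the new candidate.
    c = [fk * ck + ik * gk for fk, ck, ik, gk in zip(f, c_prev, i, g)]
    # The output gate decides how much of the cell state is exposed as the hidden state.
    h = [ok * math.tanh(ck) for ok, ck in zip(o, c)]
    return h, c

def init_params(input_size, hidden_size, seed=0):
    """Small random weights and zero biases (hypothetical initialization for the demo)."""
    rng = random.Random(seed)
    params = {}
    for name in ("f", "i", "o", "g"):
        params["W" + name] = [
            [rng.uniform(-0.1, 0.1) for _ in range(input_size + hidden_size)]
            for _ in range(hidden_size)
        ]
        params["b" + name] = [0.0] * hidden_size
    return params

# Run the cell over a toy sequence of three one-hot inputs.
params = init_params(input_size=3, hidden_size=4)
h, c = [0.0] * 4, [0.0] * 4
sequence = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
for x in sequence:
    h, c = lstm_step(x, h, c, params)
```

In practice the weights would be learned by backpropagation through time, and a framework implementation would batch these operations as matrix multiplications; the loop form here is only meant to show which gate touches which quantity.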

One of the key advantages of LSTMs is their ability to remember information over long periods of time. This is achieved through the use of a memory cell that can retain information over multiple time steps, allowing the network to learn complex patterns in sequential data. This makes LSTMs particularly effective for tasks that require modeling long-range dependencies, such as predicting the next word in a sentence or generating text.
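The retention behavior can be illustrated with a deliberately simplified scalar memory cell. The sketch below assumes the usual cell update, new cell = forget gate × old cell + input gate × candidate, and holds the gate activations fixed, whereas a real LSTM computes them from the input at every step. The function name `run_cell` and the chosen logits are hypothetical.

```python
import math

def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))

def run_cell(c0, forget_logit, input_logit, candidates):
    """Apply c_t = f * c_{t-1} + i * g_t repeatedly with fixed gate activations."""
    f = sigmoid(forget_logit)
    i = sigmoid(input_logit)
    c = c0
    for g in candidates:
        c = f * c + i * g
    return c

steps = 50
candidates = [0.0] * steps  # no new information arrives during these steps

# Forget gate close to 1: the stored value is carried forward almost unchanged.
kept = run_cell(1.0, forget_logit=6.0, input_logit=-6.0, candidates=candidates)

# Forget gate at 0.5: the stored value is halved at every step and quickly vanishes.
faded = run_cell(1.0, forget_logit=0.0, input_logit=-6.0, candidates=candidates)

print(f"forget gate near 1: {kept:.4f}")
print(f"forget gate at 0.5: {faded:.2e}")
```

With the forget gate near 1 the cell still holds most of its initial value after 50 steps, while with the gate at 0.5 the value decays to nearly zero. Because the cell update is additive rather than a repeated squashing nonlinearity, a trained LSTM can choose to keep information for as long as it is useful.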

Researchers have also explored ways to improve the performance of LSTMs on sequence modeling tasks. One approach that has shown promise is the use of attention mechanisms, which let the network weight specific parts of the input sequence when making each prediction instead of relying only on the final hidden state. By incorporating attention mechanisms into LSTMs, researchers have been able to achieve state-of-the-art results on tasks such as machine translation and text generation.
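As a rough illustration of how attention can sit on top of an LSTM, the sketch below scores each encoder hidden state against a decoder state and forms a weighted average (the context vector). It assumes simple dot-product scoring, which is only one of several scoring functions used in practice; the names `attend`, `encoder_states`, and `decoder_state` are illustrative, and the vectors are made-up stand-ins for real LSTM outputs.

```python
import math

def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def attend(query, encoder_states):
    """Dot-product attention: weight each encoder state by its similarity to the query."""
    weights = softmax([dot(query, h) for h in encoder_states])
    size = len(encoder_states[0])
    # The context vector is the attention-weighted sum of the encoder states.
    context = [sum(w * h[k] for w, h in zip(weights, encoder_states)) for k in range(size)]
    return context, weights

# Toy hidden states for a three-token input and one decoder state (hypothetical values).
encoder_states = [[1.0, 0.0], [0.0, 1.0], [0.7, 0.7]]
decoder_state = [2.0, 0.0]

context, weights = attend(decoder_state, encoder_states)
print("attention weights:", [round(w, 3) for w in weights])
print("context vector:", [round(v, 3) for v in context])
```

The first encoder state is most similar to the decoder state, so it receives the largest weight. In a full model the context vector would be combined with the decoder's hidden state before predicting the next token.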

Another area of research that has shown promise is combining pre-trained language models with LSTM networks. Large-scale language models such as BERT or GPT-3 are Transformer-based rather than recurrent, so they are not used to initialize LSTM weights directly; instead, their pre-trained representations can serve as input features for LSTM layers that are trained on a specific task. Researchers have reported improvements on a range of NLP tasks with this kind of hybrid setup, which illustrates the value of combining different neural network architectures for sequence modeling.

Overall, LSTM networks have proven to be a powerful tool for sequence modeling tasks in NLP. By leveraging their ability to capture long-range dependencies and incorporating recent advances in deep learning research, researchers have been able to achieve impressive results on a wide range of tasks. As the field of deep learning continues to evolve, it is likely that LSTM networks will remain a key building block for future advances in sequence modeling and NLP.

